Framework

Welcome to this online platform dedicated to exploring the intricate dynamics of societal trust (and its underlying constructs), initiated by international trust expert, designer, internet pioneer, innovative thinker, independent researcher and science-based author Erik Schoppen. We invite you to delve into the concealed world underneath societal trust, and its invisible yet tangible role in our networked society. This knowledge is needed to make social and environmental change more integrated and sustainable. Each trust level in the framework starts with a brief scientific explanation of the subject, followed by the research and design projects he has conducted and produced over a period of almost 30 years.

The importance of Trust Perception Research in our Society using a Socio-Ecological Network Model

Trust is a key element in building and maintaining personal relationships (between relatives, friends, co-workers, etc.), interpersonal relationships in groups and organizations (e.g. user groups, teams, employees, producers and stakeholders), and connections within larger ecosystems that users, stakeholders or the public rely on.

These network categories are increasingly overlapping due to prosocial and sustainable (idealistic or business) models with a local or global societal impact, anchored in mutual dependency within these trust relationships.

Trust between individuals contributes to the economic development of companies and even countries.

Without these trust-based collaborations, there is no social and economic trust. At the societal level, trust can be considered essential in interorganizational relationships within larger systems, as these relationships form the basis not only for the social fabric and economic capital, but also for ecological value (the level of benefits that natural ecosystems provide to support life forms on this planet).

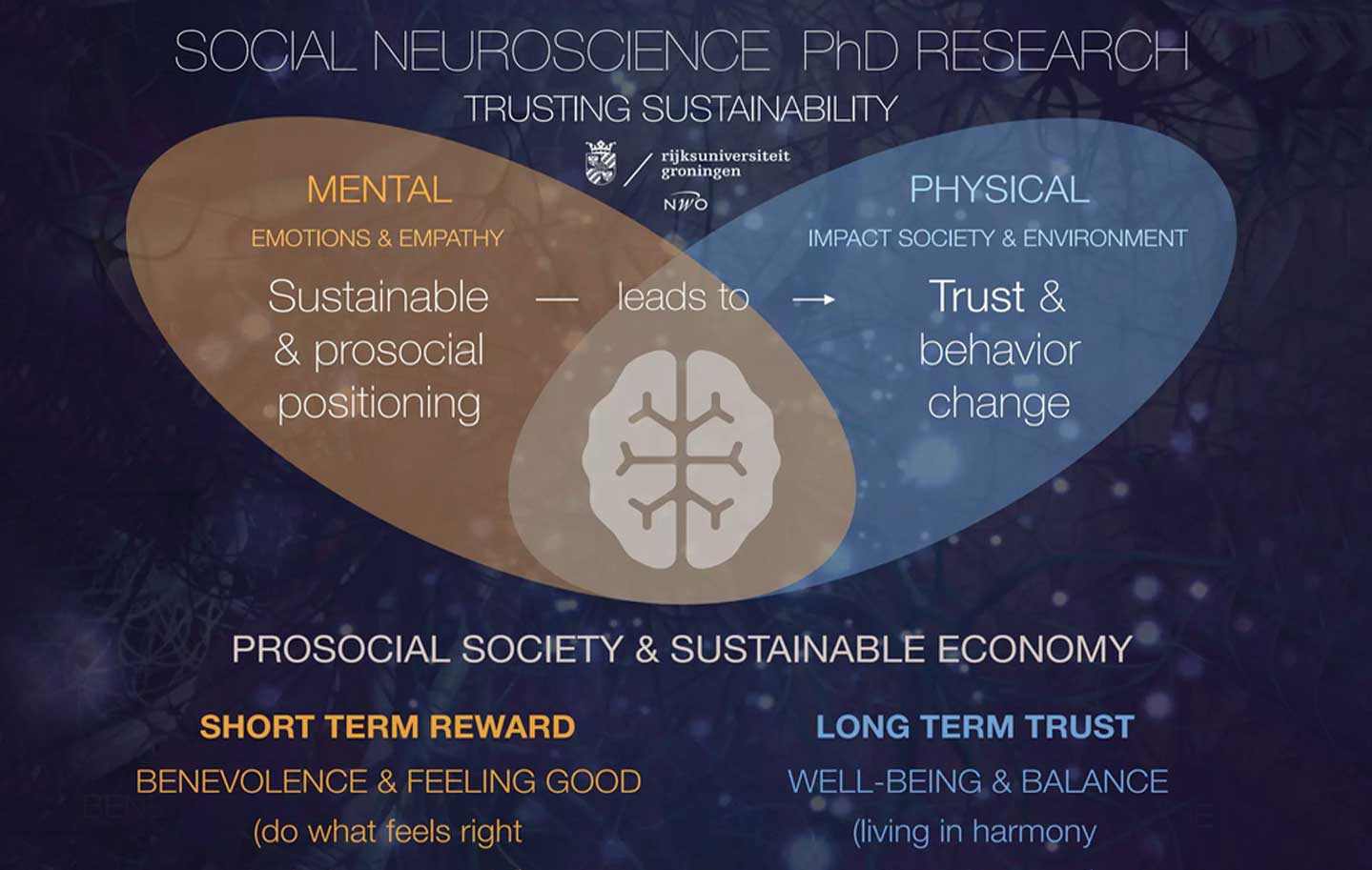

The inner foundation (within the human brain) of the framework is built upon three psychological and neurological trust attitudes: cognitive, affective and conative (behavioral).

The framework was developed and studied by Erik Schoppen¹ during his social and behavioral (neuro)scientific research 'Trusting Sustainability' at the University of Groningen (Behavioral and Social Sciences, Cognitive Neuroscience).²

The trust framework was published in Strategic Brand Management (BNL Dutch Edition, 2022, Pearson Education).³

He is considered a global thought leader in the field of trust and lectures on Brand Management, Trust Theory, Design Innovation, Neuroscience, Psychology and Sustainable Behavior at the Hanze University of Applied Sciences, Loughborough University, and other international institutes.

In 2021 he gave a TEDx talk⁴ about his voicing system to rebuild trust in our sustainable future.

Schoppen: "We can think of trust as a multi-dimensional (mental, physical and virtual) construct consisting of expanding network levels that become increasingly complex".*

1. Official website: ErikSchoppen.com

2. PhD programme funded by the Dutch Research Council (NWO)

3. Keller, K.L., Heijenga, R., Schoppen, E., & Swaminathan, V. (2022). Strategisch merkenmanagement 5. Info: www.StrategischMerkenManagement.nl

4. A new system to rebuild trust in our sustainable future | Erik Schoppen | TEDxUWCMaastricht. Available on YouTube.

*Trust attitudes are also measured at every network level.

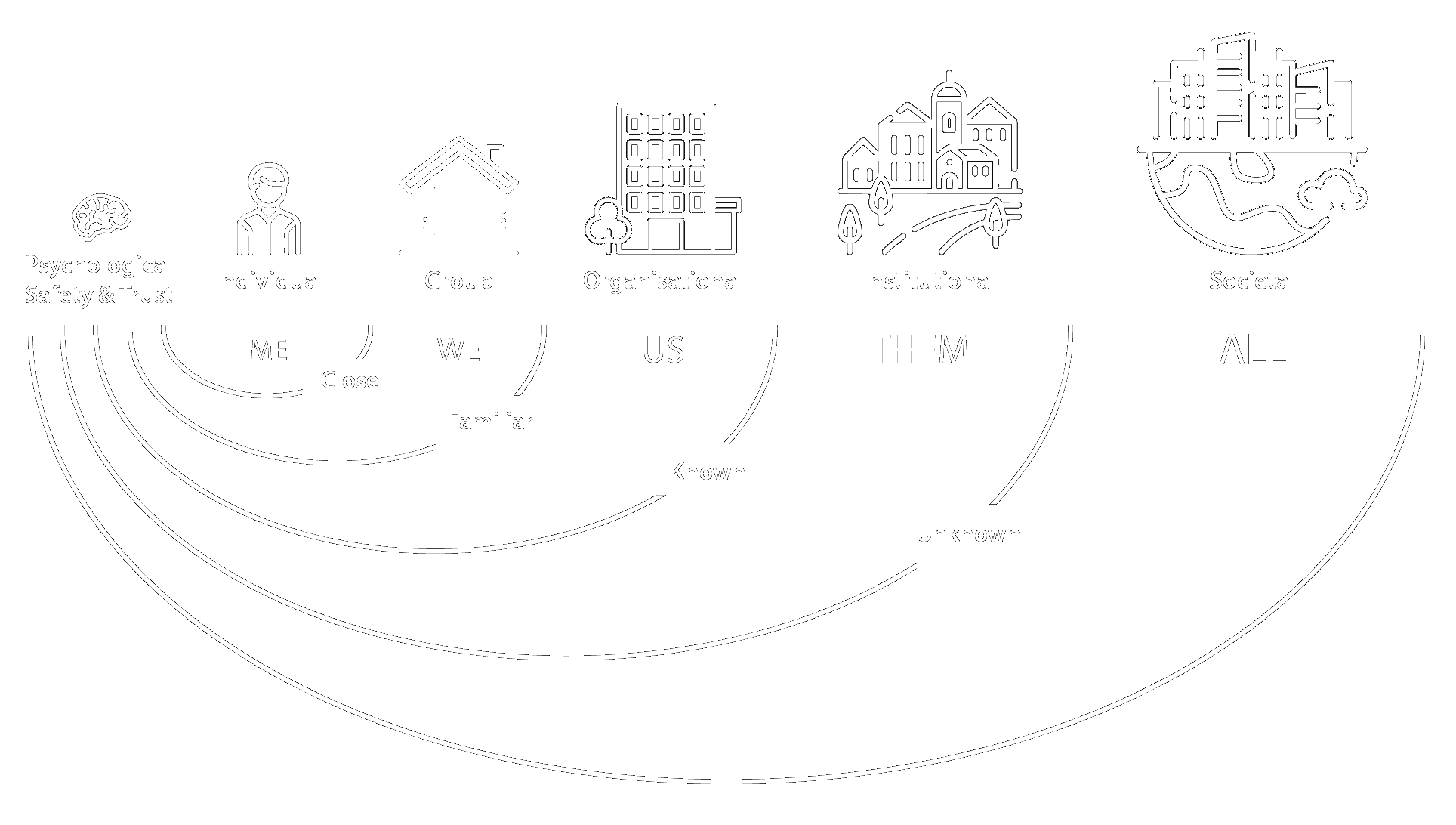

Figure 1. Schoppen, published in Strategic Brand Management, BNL 5th Edition, 2022

Figure 2. Schoppen, 2017

Multilevel Trust Framework

A key aspect of the socio-ecological model discussed here (Figure 1), alongside the hierarchy between the levels, is the set of integrative networks within the framework. The levels schematically visualise the relationships and networks between them. The framework shows that complexity increases incrementally with each higher level.

Individual (or self) trust refers to one person; social trust to pairs and groups (including teams); organizational trust to teams in an organization, and so forth. Each 'dot' in the next level represents the previous level in which it is embedded. This demonstrates the growing network complexity within each new level. For example, the level of trust between two individuals will partly depend on each individual's level of self-confidence, and the level of trust someone has in a team or in the organization. Organizational trust, in turn, will depend on the trust processes of all individuals comprising an organization. An organization characterized by a lack of social trust among its own employees, users, or other stakeholders is not perceived as reliable. This, in turn, impacts the success and collaborations of the organization at higher levels.
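The embedding described above, in which each 'dot' at one level contains units of the level below, can be pictured as a nested data structure. The following is only an illustrative sketch, not part of the published framework: the class name `TrustNode`, its methods and the example teams are hypothetical, and only the level labels (individual, social, organizational) come from the text.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TrustNode:
    """One 'dot' in the framework: a unit at some level that embeds lower-level units."""
    level: str
    name: str
    members: List["TrustNode"] = field(default_factory=list)

    def count_individuals(self) -> int:
        """Count the individuals embedded (directly or indirectly) in this node."""
        if not self.members:
            return 1 if self.level == "individual" else 0
        return sum(m.count_individuals() for m in self.members)

    def depth(self) -> int:
        """Number of nested levels from this node down to its deepest member."""
        if not self.members:
            return 1
        return 1 + max(m.depth() for m in self.members)


# Hypothetical example: two teams embedded in one organization.
team_a = TrustNode("social", "team A", [TrustNode("individual", n) for n in ("Ann", "Bob")])
team_b = TrustNode("social", "team B", [TrustNode("individual", n) for n in ("Cem", "Dee", "Eva")])
org = TrustNode("organizational", "organization", [team_a, team_b])

print(org.count_individuals())  # individuals embedded in the organization
print(org.depth())              # nested levels: organizational, social, individual
```

Adding a further level (for example a system node that embeds several organizations) only requires wrapping existing nodes in a new `TrustNode`, which mirrors how each higher level in the framework adds network complexity.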

Integrative Trust Networks Increasing in Complexity

The framework is built up from neural (micro), social (meso) and global (macro) trust networks (as depicted in Figure 2), derived from ecology and systems theory. Increased complexity allows for interplay and feedback loops across all levels. Although different classifications into micro, meso and macro levels circulate in the literature, we use the following breakdown: at the individual level, personal interactions shape micro-level trust, which aggregates into centralised group and organizational trust at the meso level. The systemic and societal levels reflect the broader and decentralised socio-economic trust at the macro level. The integrative networks have an impact on all trust levels and are crucial for open, transparent, connected and thriving socio-economic ecosystems. These concepts are also essential for human trust in AI, and for the 'trust' mechanisms that AI uses to process data for its own systems.

Psychological & Neurological Trust

Psychological perspective

Societal trust, from a psychological viewpoint, refers to the collective belief or confidence that individuals within a society have in one another, institutions, and the overall social structure.

It involves the willingness to rely on others, cooperate, and engage in mutually beneficial relationships within the societal framework.

High levels of societal trust are associated with positive psychological well-being, (organised) cooperation, and a sense of belonging within the community.

Figure 3. Schoppen, 2022

The diagram (Figure 3) shows the interaction of individuals with their immediate environment, and in a larger context with society.

In proximity, trust is the belief in the ability and willingness of others to act in one's best interests. It is essential for cooperation and collaboration, which are necessary for communities to survive and thrive and, in a wider perspective, to achieve organisational goals and sustainable development.

Individual: The trust that we have in ourselves and our own abilities. It is the foundation for all other types of trust;

Group: The trust that individuals have in groups. It is often based on our shared values, goals, and norms;

Organisational: The trust that we have in the organization's reputation, and its commitment to safety and ethical practices;

Institutional: The trust that we have in institutional systems, such as governments, courts, or other regulatory bodies;

Societal: The trust that we have in society as a whole, based on shared values, norms, and beliefs (the perceived fairness and justice of our society).

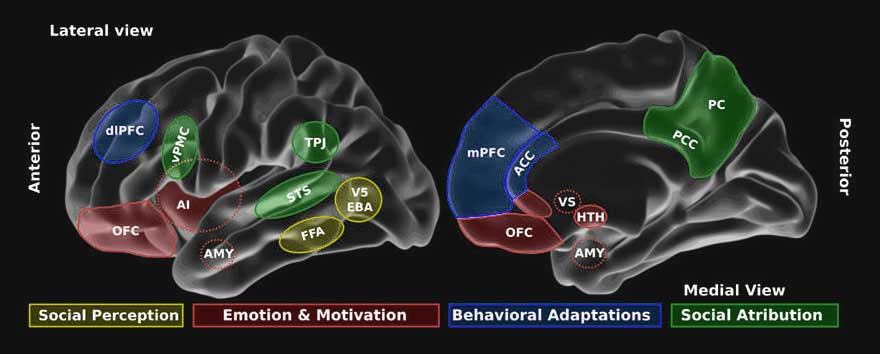

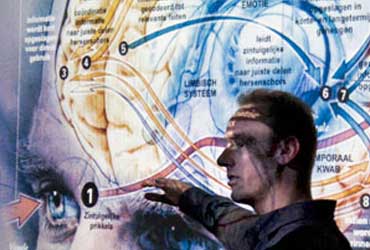

Neurological perspective

From a neurological perspective, societal trust can be defined as the neural and cognitive processes involved in the development and maintenance of trust within social interactions and networks.

It encompasses the intricate interplay between various brain regions and neurotransmitter systems that contribute to the perception, evaluation, and establishment of trust between individuals, groups, and institutions within a society.

Neuroscience is therefore strongly connected with the health and trust sciences, as the nervous system regulates (neuroreceptor) molecules, cell functions and your behavior, and mediates the physical and psychological impact of (social and environmental) ecosystems on your body and brain.

The literature⁶ shows that trust involves the activation of specific brain regions, such as the prefrontal and orbitofrontal cortex (frontal lobes), caudate nucleus, as well as the modulation of neurotransmitters/hormones like dopamine (a neurotransmitter that plays a role in pleasure and motivation), and oxytocin and serotonin (both play critical roles in fostering social bonding, cooperation, and the formation of social alliances).

Distrust is associated with the amygdala and insular cortex (temporal lobes).

Understanding societal trust from a neurological perspective offers insights into the neural mechanisms underlying social behavior, decision-making, and the dynamics of interpersonal relationships within a broader societal context.

In essence, the nature of trust is about how the brain and body respond to individual thoughts, feelings and behaviors (self), to the interaction with other living species (in groups or communities), and to non-living components (or in ecosystems) in an environment (or specific areas), depending on the conditions in a habitat (in our biosphere).

Figure 4. Billeke, P., & Aboitiz, F. (2013). Social cognition in schizophrenia: from social stimuli processing to social engagement. Frontiers in psychiatry, 4, 4.

Trust is a complex construct that is regulated by a number of different brain regions. Some of the key brain regions involved in social trust include:

Amygdala: This region is responsible for processing emotions, such as fear and anxiety. When we feel threatened or unsafe, the amygdala is activated;

Ventral tegmental area (VTA): This region is involved in processing rewards and motivation. When we trust someone or something, the VTA is activated, releasing neurotransmitters and hormones like dopamine, serotonin or oxytocin;

Prefrontal cortex: This region is involved in decision-making, behavior and cognitive control. When we are deciding whether or not to trust someone, the prefrontal cortex is activated.

6. Oh, S., Seong, Y., Yi, S., & Park, S. (2022). Investigation of human trust by identifying stimulated brain regions using electroencephalogram. ICT Express, 8(3), 363-370.

Economic & Ecological Trust

Economic perspective

Societal trust, in an economic context, denotes the extent to which individuals and institutions in a society have faith in the integrity, reliability, and stability of economic transactions, contracts, and financial systems.

These systems involve several multimodal trust aspects, like being transparent, keeping ethical standards based on shared values, and acting as a trustworthy and reliable trustee (the trust receiver, the entity being trusted or distrusted) for the trustor (the trust giver, the entity that trusts or distrusts).

Figure 5. Schoppen, 2022

The concept of (social, sustainable and financial) trust involves a number of factors, including:

Confidence: The belief (a psychological state of certainty and self-assurance) in another agent's (a living or nonliving entity) ability and predictability, influencing trust and decision-making processes in individuals.

Credibility: The perceived trustworthiness and reliability of information or individuals based on competence, integrity, and consistency;

Reliability: The consistent and dependable quality or performance (ensuring consistent results) of information, individuals, or (process) systems fostering trust;

Trustworthiness: This is the other agent's reputation for being honest and reliable;

Security: The feeling (emotional state) of safety and protection in a relationship with an agent;

Transparency: The other agent's willingness to share information with us and be open about their intentions;

Integrity: The other agent's commitment to behave ethically based on values or moral standards, even when it is difficult;

Vulnerability: The willingness to open ourselves up to another agent and risk being hurt;

Uncertainty: The cognitive state characterized by a lack of confidence or predictability in outcomes.

Ecological perspective

Societal trust, in an ecological context, refers to the confidence and cooperation among individuals, communities, and governing bodies in addressing environmental challenges and promoting sustainable practices. It involves the belief in the collective ability to work towards environmental conservation, resource management, and the mitigation of ecological threats. Societal trust in this context facilitates collaborative efforts to protect natural resources, reduce environmental degradation, and foster the sustainable well-being of both human societies and the natural world.

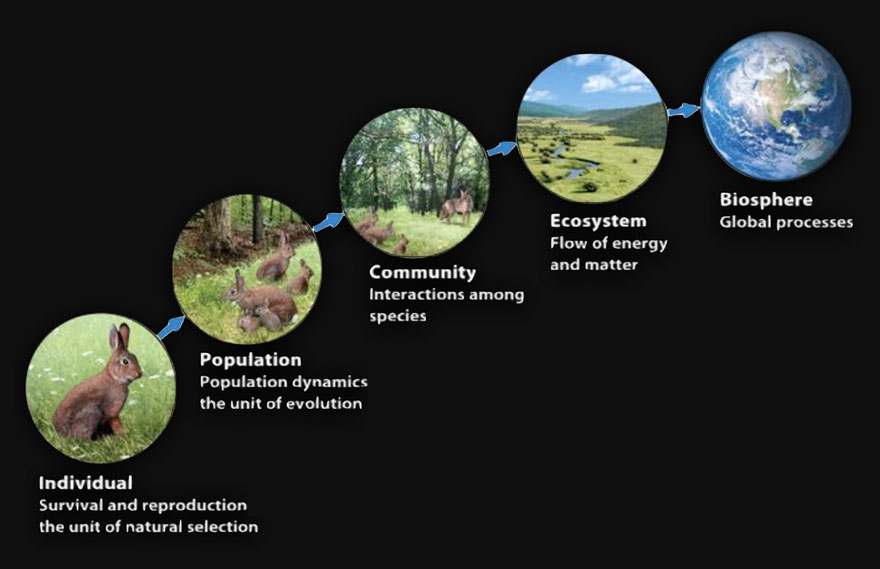

The levels in the framework are derived from the Hierarchical Organization of Ecology.⁷

Ecology is the scientific study of interactions among organisms and between organisms and their surroundings and environment.

Ecology teaches us how network patterns emerge when species interact with each other within a community and with their environment.

Figure 6. From publication Environmental Science for AP, Second Edition, 2015

Levels of Organization:

Individual: One Organism (A)

Population: Group of individuals of the same species (AAA);

Community: Different populations living in an area (AAA+BBB+CCC);

Ecosystem: Communities and non-living components (Biotic & Abiotic);

Biome: Ecosystems with similar climate and communities;

Biosphere: The air, land, and water on Earth where life can exist.

7. Haber, W. (2004). The ecosystem–Power of a metaphysical construct. Landschaftsökologie in Forschung, Planung und Anwendung. Friedrich Duhme zum Gedenken.[Schriftenreihe] Landschaftsökologie, Weihenstephan, 13, 25-48.

Odum, E. P., & Barrett, G. W. (1971). Fundamentals of ecology (Vol. 3, p. 5). Philadelphia: Saunders.

Trust level

'ALL' - Societal Trust

Societal trust refers to the trust of an individual in a group of individuals, or in 'the people' as a collective whole, including the socioeconomic systems in which society has sustainably organized itself - up to a global level.

Trust in Society in Times of Uncertainty

The most complex level at which trust plays a role in the framework is the socioeconomic level.

Socioeconomic trust can be broken down into two main concepts: trust in society and trust in the economy.

Societal trust can be defined as the subjective attitudes and confidence of an individual in a group of individuals (Ho et al., 2019)¹, or in ‘the people’ as a collective whole, including the systems in which society has sustainably organized itself.

Societal trust also has a positive effect on financial trust (Dudley & Zhang, 2016)².

Hence, trust plays a crucial role in the economy: it increases the efficiency of economic exchanges. Kenneth Arrow (1972, 1974, p. 23)³ described trust as a ‘lubricant for social systems’ and argued that ‘much of the economic backwardness in the world can be explained by the lack of mutual confidence.’ Zak and Knack (2001)⁴ showed that high-trust countries grow faster than low-trust countries.

In times of uncertainty, when socio-economic systems are under pressure, trust in (social) sustainability and compliance with rules become increasingly important. Such pressure can come from climate-related environmental changes that influence human societies on an ecological, political or financial scale (Warner et al., 2010).⁵

Other crises, such as food and energy shortages or pandemics, also lead to physical dangers and financial risks. These are all events that affect environmentally dependent socio-economic systems.

1. Ho, K. C., Yen, H. P., Gu, Y., & Shi, L. (2019). Does societal trust make firms more trustworthy?. Emerging Markets Review, 100674.

2. Dudley, E., & Zhang, N. (2016). Trust and corporate cash holdings. Journal of Corporate Finance, 41, 363-387.

3. Arrow, K. J. (1972). Economic welfare and the allocation of resources for invention. In Readings in industrial economics (pp. 219-236). Palgrave, London.; Arrow, K. J. (1974). The limits of organization. WW Norton & Company.

4. Zak, P. J., & Knack, S. (2001). Trust and growth. The economic journal, 111(470), 295-321.

5. Warner, K., Hamza, M., Oliver-Smith, A., Renaud, F., & Julca, A. (2010). Climate change, environmental degradation and migration. Natural Hazards, 55(3), 689-715.

Trust on a Micro-Society Scale

Image: Schoppen, 2023

Largest field study into festival trust worldwide

In 2023, Erik Schoppen and his team conducted a large-scale, exploratory field study into festival trust at the Lowlands music festival (The Netherlands).⁶

Main research question: Do music festivals (as a temporary micro-society) contribute to increased trust in society?

At the festival, with over 65,000 festival goers, visitors could share their trust in the 'Gate of Trust & Love' and could walk a 'Trust Trail' with reaction time experiments.

Besides the gate, a large installation with specially developed (scanning) software that measured trust at an implicit level by how widely visitors spread their arms [n=8207], visitors could also participate in nine short click-surveys on their own smartphones, which measured sustainable trust attitudes at a cognitive, emotional and behavioral level in real time [n=684].

This was done over three days in a music festival setting at 10 different contact measurement points on the site. All data was aggregated in real time into one general trust scale (average 8.4 on a scale of 10), which was displayed on a large trust meter at the entrance to the festival, easily visible to the public.
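How the study combined its readings is not described here, so the following is only a rough sketch of one way a live trust meter could keep a single running average as new scores arrive. It assumes every reading has already been converted to a score between 0 and 10 and that all readings weigh equally; the class name `RunningTrustScale` and the sample readings are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class RunningTrustScale:
    """Incrementally updated mean of trust scores on a 0-10 scale (assumed equal weights)."""
    count: int = 0
    mean: float = 0.0

    def add(self, score: float) -> float:
        """Fold one new reading into the running mean and return the updated value."""
        if not 0.0 <= score <= 10.0:
            raise ValueError("score must lie between 0 and 10")
        self.count += 1
        # Incremental mean update: earlier readings do not need to be stored.
        self.mean += (score - self.mean) / self.count
        return self.mean


scale = RunningTrustScale()
for reading in (8.0, 9.0, 7.0, 10.0):  # made-up readings, not study data
    scale.add(reading)

print(round(scale.mean, 2))  # value a live trust meter would display
```

The incremental update keeps memory use constant, which suits a display that has to refresh continuously while thousands of readings come in.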

Read more about the study (Dutch) at www.festivaltrust.org.

6. The on-site research was done in collaboration with the National Science Agenda / NWO.

Impact of Brand Trust on Society

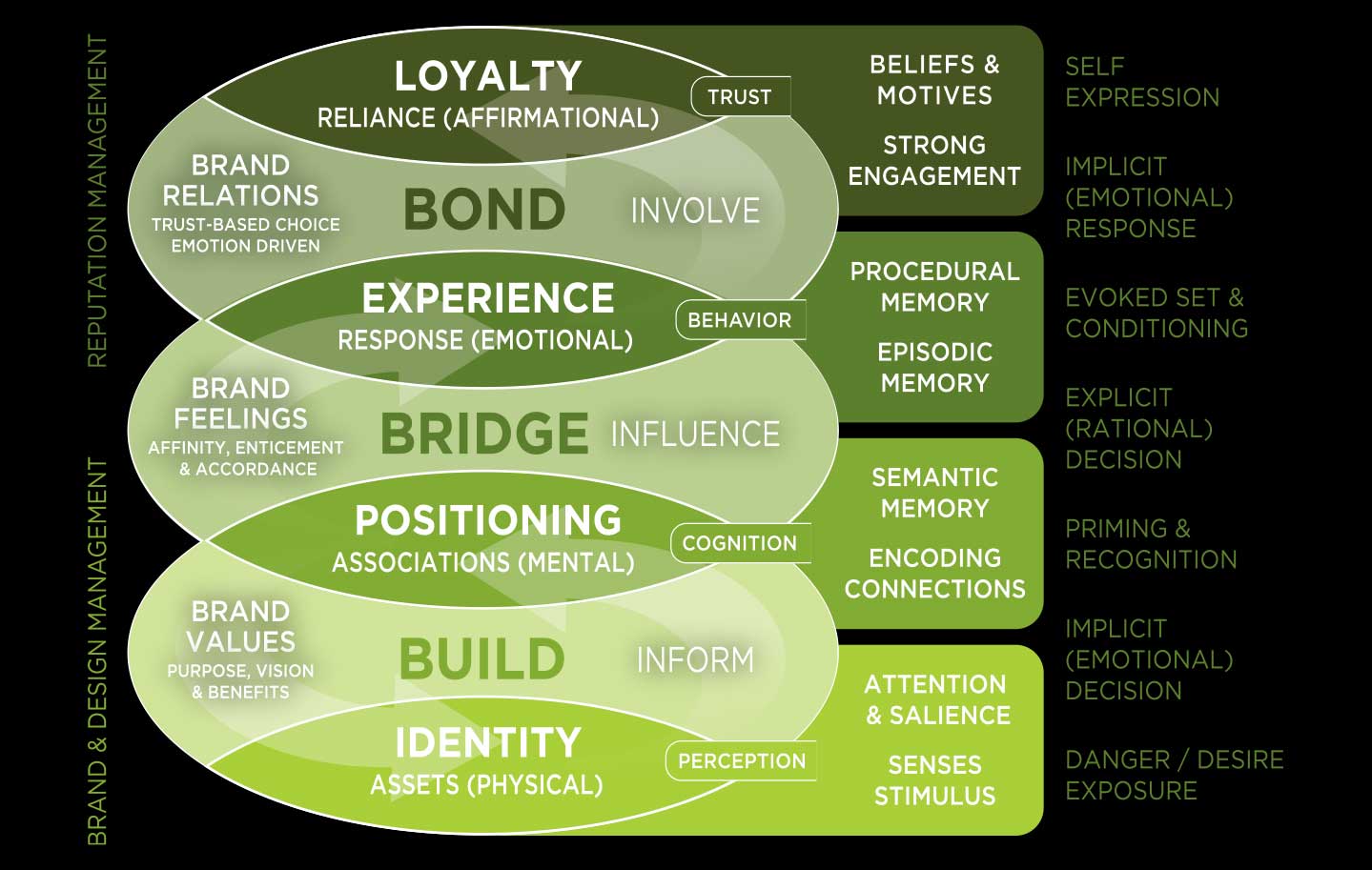

Image: Schoppen, 2017

Impact with your brand on people, markets and society

In 2017 Schoppen published an article that discusses brand management as an indispensable component in developing a sustainable business strategy.

In addition to knowledge regarding sustainable leadership, trust and behavioral change, it also provides insight into human decision-making behavior, emotions, motives and driving forces.

‘Human-centred’ innovation and brand policy can lead to greater awareness and involvement, stronger brand relationships and a more robust brand trust. This leads to a stronger, independent and more prosocial brand, and a sustainably higher brand equity; organisational capital that in turn can contribute to responsible and future-oriented innovation.

The publication (Schoppen, 2017)⁷ deals with mental brand policy from a macro-economic, a brand strategic and a neuroscientific perspective.

With the methodology, organisations can make an impact with their brand(s) on people, markets and society.

The method is applicable to issues involving strategic brand and design management in which brand perception, brand experience, brand interaction and brand trust play an integral role.

7. Schoppen, H. S. (2017). The Impact of Brands on People, Markets and Society: Build Bridge Bond Method for Sustainable Brand Leadership. H.S.Schoppen.

Trust Level

'THEM' - (Eco)System Trust

The confidence in a social, economic, or political system, including institutional, technological, ecological, or societal systems. It involves the functionality and reliability of larger anonymous systems that individuals interact with.

We trust systems (in most cases), but do systems trust us?

Biological/ecological/planetary system studies are important for the continuity of our life on this planet. But on this platform, we focus on trust systems, and the role of organisations and institutions that use new technology, like AI.

Besides the natural existential systems on which we depend to survive, system trust also refers to our trust in economic, societal, political or other institutionalized systems, such as ‘the banking system’, ‘the stock market’, ‘democracy’ or ‘the courts’.

At the system level, trust is no longer directed at an individual or organization with a clear identity, but rather at a fuzzy, all-encompassing concept. Here, trust can be used as a [heuristic] mechanism that reduces [social] complexity and enhances cooperation in trust systems, such as political or financial systems (Luhmann, 1979, 1984; Blomqvist, 1997; Jalava, 2003).¹

When cooperation is successful in organizational openness and competitiveness while reducing social uncertainty and vulnerability, it leads to higher institutional-based trust (Kramer & Tyler, 1996; Bachmann & Inkpen, 2011).²

We are now entering a new era, that of our trust in 'artificial intelligence systems', which will play an existential role in people's lives, in the economy, in nature preservation and in our society.

System trust plays a crucial role in enabling individuals or organizations to engage with complex systems, ranging from natural ecosystems to online environments and AI.

It underpins the willingness to participate in online interactions and transactions, share personal sensitive information, and rely on system feedback [e.g. answers via prompts] or autonomous agent recommendations.

Building and maintaining system trust requires a multifaceted approach that addresses both technical and human (attitude) factors.

Within a system, trust by humans can be a result of decision processes based on acquired knowledge [cognitive trust], positive expectations and outcomes [affective trust], and the actions and risks taken [conative trust].

The foundation of our framework, as discussed on this platform, is built upon these three psychological and neurological trust attitudes: cognitive, affective and conative (behavioral).

Machine trust, on the other hand, has no [biological] emotions, nor does it have mortality or morality (feeling emotional attachment or a conscious moral obligation to keep you safe).

Although a system can be (self-)programmed [as a trustor] to serve solely in the interest of the user [as a trustee], the question remains: does an autonomous agent system rely on your intentions if they conflict with other goals or could harm the public?

Trust Dilemmas

Although biological/ecological/planetary system studies are important for the continuity of our life on this planet, this level focuses on trust systems, and the role of AI in these systems.

We added two extra cases within this level where we discuss the role of trust (dilemmas) in the use of systems; one that shows the moral and ethical implications of using empathic algorithms with the risk of emotional [unpredictable/irrational] behavior, the other investigates system warranties by risk taking (uncertainty regarding the other AI-party’s motives, intentions and actions).

1. Luhmann, N. (1979). Trust and Power. Wiley, Chichester.; Blomqvist, K. (1997). The many faces of trust. Scandinavian journal of management, 13(3), 271-286.; Jalava, J. (2003). From norms to trust: The Luhmannian connections between trust and system. European journal of social theory, 6(2), 173-190.

2. Creed, W. D., Miles, R. E., Kramer, R. M., & Tyler, T. R. (1996). Trust in organizations. Trust in organizations: Frontiers of theory and research, 16, 38.; Bachmann, R., & Inkpen, A. C. (2011). Understanding institutional-based trust building processes in inter-organizational relationships. Organization Studies, 32(2), 281-301.

AI-System Trust Interdependency

Image: Schoppen (artist impression), actual screendump Bard, 2023

Does AI use the same Trust Heuristics as Humans do?

As users interact with AI-systems, they form perceptions of the system's trustworthiness [human trust], which in turn influences their willingness to engage with the system and to rely on its decisions [machine trust].³

Conversely, the system's behavior and performance can also affect user trust, leading to a 'feedback loop' that reinforces (or erodes) mutual trust [as discussed in the 'social risk disclosure gap' study].

Establishing trust in AI-systems involves a reciprocal relationship where the AI's consistent performance and unbiased behavior build user confidence, while user trust influences how the AI is perceived and utilized.⁴

Current AI models use human-based (and therefore often biased) data, so they also copy the same human (linguistic and perceptual) biases.

That an LLM (Large Language Model) sticks to a wrong answer, even after being given the correct one, is because an LLM trained on a fixed dataset cannot update its internal model: it will give the same answer or start 'hallucinating' to provide 'reasonable' new answers.

In the coming years, autonomous AI systems will learn from their environment and from themselves, and respond to new information and situations through multimodal models, combining perception, cognitive reasoning, memory models, emotion (empathy), and (trust) behavior.

The ethical implications of AI interdependence, including potential unwanted consequences, necessitate ongoing interdisciplinary research to ensure the responsible development and integration of beneficial AI technologies, and in the near future artificial general intelligence (AGI), in a way that aligns with societal values and with psychological and sociological principles of trust.

Therefore, we need a discussion about trust interdependency between biological (Brain) and artificial (AGI) systems. This is explained in the next section.

Biological and artificial trust systems

Biological lifeforms base their multimodal trust on available sensory information to give meaning to what is perceived as 'reality', and so does AGI.

But they are different in nature. Although biological and artificial neural networks share the concept of interconnected nodes that process information, biological trust is based on electrochemical neural networks (nerve signals carried by action potentials, and molecules that let neurons communicate with each other across multiple synapses, evoking implicit and explicit feelings), whereas AGI is based on digital/electrical neural networks whose nodes communicate through several inputs and one output.

A deeper understanding of how these shared trust systems can 'work together in a form of symbiosis' will enhance our ability to develop more empathic and objective learning and reasoning AGI systems, contributing to a more social, sustainable, and safer world.⁵

3. Sundar, S. S., & Kim, J. (2019, May). Machine heuristic: When we trust computers more than humans with our personal information. In Proceedings of the 2019 CHI Conference on human factors in computing systems (pp. 1-9).

4. Schroeder, J., & Schroeder, M. (2018). Trusting in machines: How mode of interaction affects willingness to share personal information with machines.

5. This is one of the topics discussed at the Beneficial AGI Summit 2024. On the first two days of the BGI Summit, top thought leaders from around the globe will engage in comprehensive, detailed discussions of a wide range of questions regarding various approaches to AGI and their ethical, economic, psychological, political, environmental and other implications. https://bgi24.ai

Trust Dilemma

image: Schoppen (ai-generated)

Do we trust biased/irrational AI-systems if they feel more human?

We tend to trust AI-systems if they act in a 'rational', predictable, preprogrammed way [like Asimov's three laws of robotics]. This is not how humans think, feel and act. And this is not what we want from 'empathic machines' or sensitive AI-agents that support our emotional needs. People are irrational and emotional, show empathy and react more instinctively, based on interoception and intuition. Just a hunch or an obscure feeling can trigger a human to act and do the right (or wrong) thing. We accept this kind of behavior from humans (this is what makes us 'human'), but not from AI-systems. But AI is not infallible. We have to accept 'wrong' choices or 'errors' within a system that acts in an intuitive way (perceived from a human perspective). Think of a human firefighter who makes a decision based on intuition: something feels 'wrong' and he or she does the opposite of what a robot would do based on rational analysis. If a human does something wrong we accept the mistake, but if a machine makes that same mistake it is an unforgivable error. Research shows that people trust advice from humans more, even if the answer seems incorrect. But do we trust advice from an irrational machine?

Solving a moral AI-dilemma - with (going back to) option C

Do you know about the self-driving AI car that has to choose between killing the driver [option A] or killing pedestrians [option B]? Spoiler: it does not...

Based on my background in trust decision making, my hypothesis is that an autonomous AGI-system will simply take a step back [as humans do when a problem becomes too complex] and leave the final decision to the driver to avoid liability.

How does this work? Option A is the best solution for bystanders and insurance companies (as it limits the risk solely to the driver and passengers). Option B is favored by car manufacturers and car drivers, as it prioritises the safety of the manufacturer's customers over that of bystanders and other drivers.

Of course, a car manufacturer can program its AI in a biased way (to save the driver first, which would increase brand trust immensely), but this is an ethical no-no.¹

And for an AGI, making such an ethical decision without user consent would not be acceptable or trusted.

Therefore, in case of a dangerous situation or a possible collision, the most acceptable decision [option C] is to stick to the status quo on which everyone already agrees: the driver is responsible, following existing traffic laws and regulations.

Simply put: the AI will put the driver back into the driver's seat with a warning signal at the moment it detects a possible collision, allowing the driver to make the final decision. However, this introduces challenges related to reaction time and the potential for human error.

Giving the driver control over the vehicle [human override] makes the decision human again (see accepted and trusted legislation).

Other options will still have ethical [trust] complications.²

For instance, the AI-system could introduce randomness to distribute the harm more evenly or reduce predictability [and therefore also reduce trust on both sides].

But this does not solve the liability and legislation discussion, and this approach may have its challenges in terms of public perception, acceptance and predictability. But it could avoid the moral burden of actively choosing one life over another.

Finally, the option to input a personal ethical preference into the autonomous vehicle system could help. But this approach raises concerns about potential misuse and the need for societal consensus on ethical standards [and also reduces trust].*

1. De Freitas, J. (2023), Will Consumers Buy ‘Selfish’ Self-Driving Cars?, Wall Street Journal.

2. https://www.moralmachine.net

*This is a snippet from the upcoming book Trust Reset, which will be published at the end of this year.

Risk Taking in System Trust

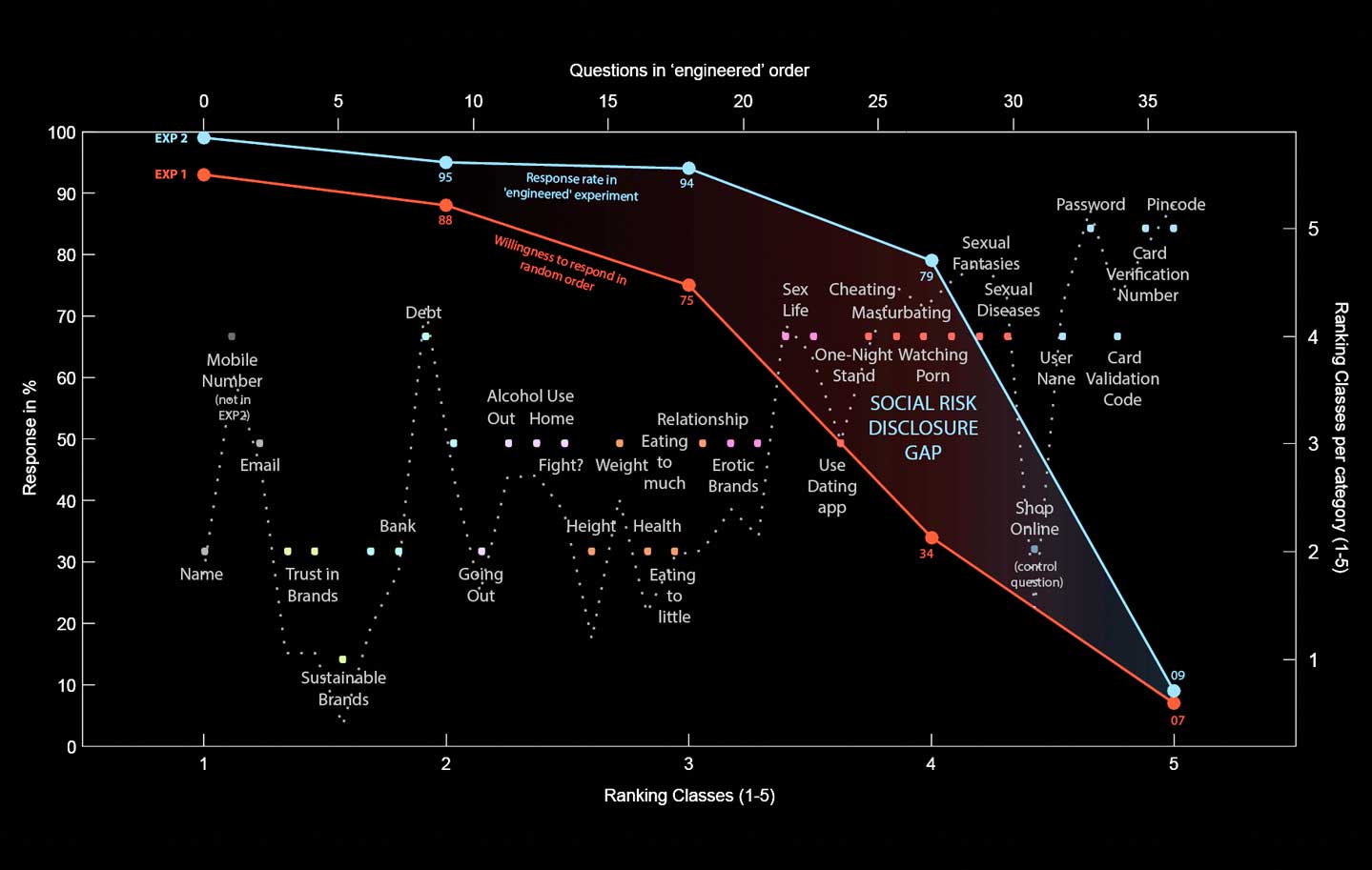

Image: Schoppen, Jolij, 2015

Increased system trust leads to more sensitive data sharing

Are users willing to share more personal information as a system gradually becomes more intimate with them?

In most cases they are, as we discovered in a 'controversial' study.6

We found that participants disclose more sensitive personal information when the questions in a questionnaire gradually become more privacy-sensitive, compared with a survey that asks the same questions in random order.

This gradual progression [as a compliance strategy] creates a sense of trust between the participant and the system (in our case a survey administrator), establishing a comfort level that may not have been achieved if the most intimate questions were asked immediately.

Additionally, as the questions gradually become more intimate, participants might adjust their perceptions of what is considered sensitive, leading them to be more willing to share personal information.

The strongest effect we found was in questions regarding sexual preferences, which in most cases are kept private from the outside world. A breach of confidence involving this highly sensitive information can put social status and social relationships at risk.
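
The two survey conditions described above can be illustrated with a minimal Python sketch. The questions and their sensitivity scores (1 = low, 5 = high) are hypothetical placeholders, not the items used in the study, and the function names are assumptions made for this illustration only.

```python
import random

# Hypothetical questions with an assumed privacy-sensitivity score
# (1 = hardly sensitive, 5 = highly sensitive). Not the study's items.
QUESTIONS = [
    ("What is your favourite colour?", 1),
    ("What is your monthly income?", 3),
    ("What is your age?", 2),
    ("What are your sexual preferences?", 5),
    ("Do you have any health problems?", 4),
]


def gradual_order(questions):
    """Gradual condition: least sensitive question first, most sensitive last."""
    return sorted(questions, key=lambda q: q[1])


def random_order(questions, seed=None):
    """Control condition: the same questions in random order."""
    rng = random.Random(seed)
    shuffled = list(questions)  # copy, so the original list is untouched
    rng.shuffle(shuffled)
    return shuffled


if __name__ == "__main__":
    for text, score in gradual_order(QUESTIONS):
        print(score, text)
```

Both conditions contain exactly the same questions; only the order differs, so a difference in disclosure can be attributed to the ordering.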

We call this effect, this gap, the ‘social risk disclosure gap’.7

6. The research (study 1) was carried out after approval by the ethics committee, with informed consent and with attention to participant well-being - a priority in research involving privacy-sensitive information.

In the consent form we were transparent about the nature of the study (but not that we were researching risk-taking) and we gave participants the option to decline or skip any questions they were uncomfortable answering.

When we replicated this study (study 2), there was a complaint, and the ethics committee ordered us to stop the analysis and delete all data. The graphic shown above is from the first study. But feel free to replicate; I'm more than happy to send you the research setup.

7. Schoppen, H.S., Social Risk Disclosure Gap

Trust Challenges

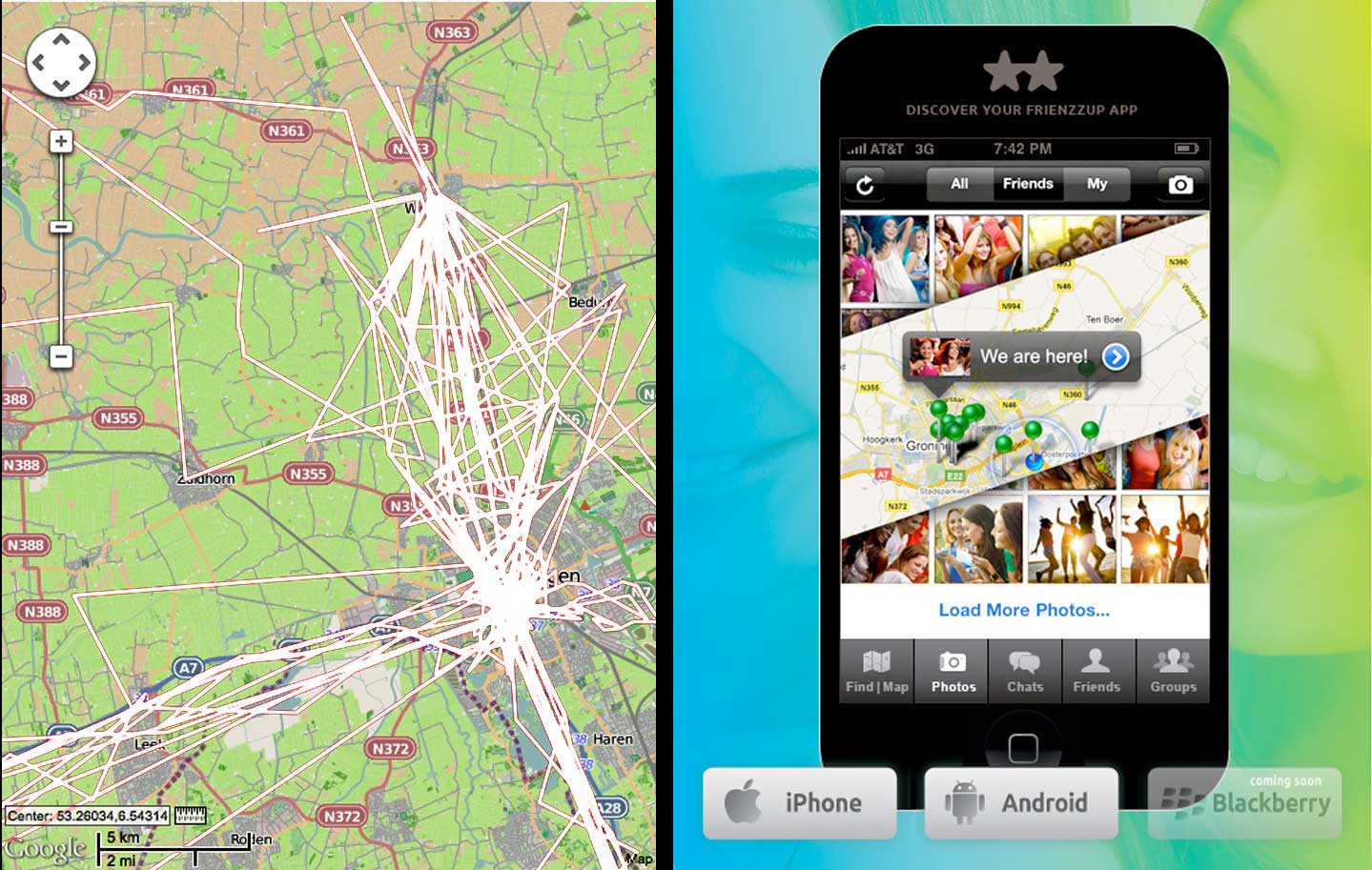

Image: Schoppen, Frienzzup 2012

From 'Warm Human' to 'Cold System' levels

In 2010, I invented and co-founded Frienzzup (see image above), a popular social media app that combined the most popular features of that time: (group) chat - before WhatsApp, instant photo sharing - before Instagram, and a Friend Finder - using locations on the map. But we lost out to the immense success of WhatsApp.

In those days we had ethical discussions: should we use data stored in our systems to predict interpersonal relations and/or user behavior before our users would know this themselves (see also my GarbagePark.com project in the Organisational Trust Level)?

Think of combining datasets for connecting people based on their shared history or for future modeling. We reached an ethical threshold: we would not store user information 'forever', but only for as long as a registered user was using our services. After a dormant period of a year we would (after notification) delete all personally retrievable data. And if you deleted your account, we would immediately delete all your user data, to avoid abuse through possible hacks.

At the time, we thought this was the ethically right thing to do to protect our users from future abuse. This is still not common practice for most online social media platforms today.

The image above shows the visible 'human level', where user interactions were visible [as shown in the GUI on the right of the image], and underneath it the hidden 'system level', where unnoticeable pattern recognition - by data tracking and tracing (collection process), and profiled data storage and retrieval - takes place [as shown in the trace data on the left of the image].

To build and maintain trust at all network levels, it is crucial to be transparent at all levels of the framework.
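
The retention rules described above can be sketched as a small decision function. This is a minimal illustration in Python, not the code Frienzzup actually ran: the `Account` fields, the action names and the 365-day constant are assumptions derived from the description (one year of inactivity, notification first, immediate deletion on account removal).

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Assumed length of the inactivity period ("a year") before deletion.
DORMANCY_PERIOD = timedelta(days=365)


@dataclass
class Account:
    last_active: datetime          # last time the user used the service
    notified: bool = False         # has the user been warned about deletion?
    deleted_by_user: bool = False  # did the user delete the account?


def retention_action(account: Account, now: datetime) -> str:
    """Return the (hypothetical) action the retention policy prescribes."""
    if account.deleted_by_user:
        return "delete_now"            # user data is removed immediately
    if now - account.last_active < DORMANCY_PERIOD:
        return "keep"                  # user is still active
    if not account.notified:
        return "notify"                # warn first, delete later
    return "delete_personal_data"      # inactive for a year and notified


if __name__ == "__main__":
    now = datetime(2012, 6, 1)
    inactive = Account(last_active=now - timedelta(days=400))
    print(retention_action(inactive, now))
```

Keeping the policy in one pure function makes it easy to audit and to explain to users, which supports the transparency argued for in this section.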

From social/organisational trust to decentralised (system) trust

In the past, trust was primarily based on personal relationships and individuals' ability to maintain integrity and uphold [social and contractual] commitments. However, as society became more complex and the need for efficiency grew, there was a move toward a more 'objective' form of trust, relying on systems, procedures, and quantifiable data. The rise of administration, machines, and automation provided a solution, introducing a new type of trust: not in people, but in systems, procedures, and numbers.

To regulate this new type of trust, it was anchored in centralized institutions like banks, governments, and corporations. These entities establish trust through brand recognition, established reputations, and legal frameworks. For example, the freedom of workers was restricted and replaced by procedures and protocols based on reports and statistics. However, because it was still people who carried out the procedures and operated the systems, people and trust remained central.

Now this is being replaced by decentralised system trust. As a result, a 'dehumanized' society is emerging, driven by non-human institutions and their computer systems that apply impersonal standard rules: a society that no longer functions on the basis of biological trust [human trust], but on the basis of mechanical evidence [machine trust].

So, are we willing to go from personal, trust-based information sharing to data sharing in anonymous AI systems? What if this is the future? How do we bridge that trust gap?

Building trust throughout all network levels with AGI

What could help to bridge this gap are AGI (Artificial General Intelligence), DAOs and blockchain technology. DAOs are decentralized autonomous organizations governed by smart contracts on a blockchain.

At its core, blockchain is a distributed ledger technology. Smart contracts that run on it enforce pre-defined rules, which reduces the room for human bias and manipulation.

Transactions are recorded on interconnected computers (nodes) across a network, instead of a single central server.

This creates a system where all mutations and decisions are recorded as smart contracts in a collectively owned system, fostering transparency, fairness, belonging and accountability.

AGI can build trust at all levels, starting from the psychological and neurological perspective.

For example, AGI can enhance fairness. Transparency activates the prefrontal cortex, linked to trust evaluation and fair decision-making.

Or evoke belonging. Collective ownership engages reward pathways in the ventral striatum, promoting feelings of connection and trust.

Finally, AGI can create self-confidence. Code-driven rules reduce amygdala activity, associated with fear and uncertainty, by providing predictable outcomes.

The integration of AGI with DAOs and blockchain could further revolutionize trust.

AGI could analyze data and propose beneficial, fair and efficient solutions for DAO governance, fostering trust in decision-making.

AGI could also tailor trust mechanisms to individual members (Personalized Trust Models), based on their past interactions and risk profiles.

So, AGI offers some great future advantages, but ethical considerations still arise.

Ensuring fairness and mitigating bias in AI-powered trust mechanisms will be crucial for adoption.

And maintaining human oversight and control over AGI applications (and in DAOs) is vital for success.

Without transparency and explainability of AI-driven decisions, users will not trust AGI systems.*

*This is a snippet from the upcoming book Trust Reset, which will be published at the end of this year.

Trust Level

'US' - Organisational Trust

The trust we have in an entity with a clear individual identity, such as a company, institution, or any organized group or community with a defined structure, consistent, competent and human in behavior, and having like-minded values.

Organisational trust starts as a conscious and cognitive decision-making process

Organizational trust refers to the trust one has in organizations with a clear identity, such as a brand. It differs from social trust because organizational trust no longer deals with trusting named individuals. Trust plays an important role in organizational life as it facilitates information exchange and contributes to sustainable trust relationships.

To build trust relationships within an organization, confidence of the trustor in people or in the organization that they represent is required. People will trust organizations when they operate from a clear individual identity and have a specific societal role - like (local) governments, companies or NGOs (Hitch, 2012).1

Consistent with this approach, Zaheer, McEvily & Perrone (1998)2 argue that trust originates on an individual level, even if individuals belong to a group or larger organization.

There should be a generally accepted belief of organizational commitment, which will result in an increased self-esteem and involvement (Mowday, 1998).3

The organization, including all its values, functions as a point of reference.

The decision to trust an organization is a complex one: contrary to when evaluating the trustworthiness of another person, an individual cannot directly evaluate the affective or conative (behavioral) aspects of an organization. Therefore, evaluation largely takes place on a conscious, cognitive level: can we trust this organization based on what we know of it, and how it deals with the issues and problems at hand?

As a result, higher levels of organization are at a disadvantage in gaining trust via all three routes for building trust (affect, behavior and cognition). This means that organizations need to be very careful in their communication in order to gain and keep [brand] trust.

1. Hitch, C. (2012). How to build trust in an organization. London: UNC Executive Development.

2. Zaheer, A., McEvily, B., & Perrone, V. (1998). Does trust matter? Exploring the effects of interorganizational and interpersonal trust on performance. Organization science, 9(2), 141-159.

3. Mowday, R. T. (1998). Reflections on the study and relevance of organizational commitment. Human resource management review, 8(4), 387-401.

Sustainable Brand Trust & Value

Image: Book Cover Schoppen, BVC Schoppen, Heijenga, 2022

Building future-proof brand equity in a sustainably connected world

Building, measuring and managing future-proof brand equity in a sustainably connected world: that is the aim of Strategic Brand Management, Benelux 5th Edition, 2022 [Dutch title 'Strategisch merkenmanagement', also known as the 'SMM5'], the bestselling standard in the field of future-oriented and sustainable branding.3

The fifth edition is fully focused on sustainable brand building, on an economic, social and ecological level.

The book [by Keller, Schoppen, Heijenga and Swaminathan, all acclaimed brand research experts] covers the entire field and provides insight into the everyday practice of (digital, social & sustainable) branding through the many models, step-by-step plans, cases and examples. Strategic brand management is the standard work for (future) managers, directors, brand developers, brand managers and marketers and an indispensable title within higher (economic) education. Research shows that brand strategy is one of the 'most vital strategic capabilities' to sustainably bind customers and other stakeholders to brand organizations.

In today's economy, where economic, ecological and social values are becoming equally important, cooperation between organizations and people, and how we deal with natural resources and the environment, become essential.

Therefore, the authors Schoppen and Heijenga introduced the Brand Value Compass.4 This is a framework of knowledge domains and impact factors to develop sustainable strategies and ecosystems for multiple brand value creation [click 'next slide' above to view the compass].

For each quadrant, the compass offers opportunities to create sustainable brand added value and trust.

Read more about this publication (Dutch) at www.strategischmerkenmanagement.nl.

3. Keller, K.L., Heijenga, R., Schoppen, E., & Swaminathan, V. (2022). Strategisch merkenmanagement, Pearson Education. Info: www.SMM5.nl

4. Schoppen, H.S., Heijenga, R. (2022) Brand Value Compass, A Holistic Approach for creating multiple brand value(s).

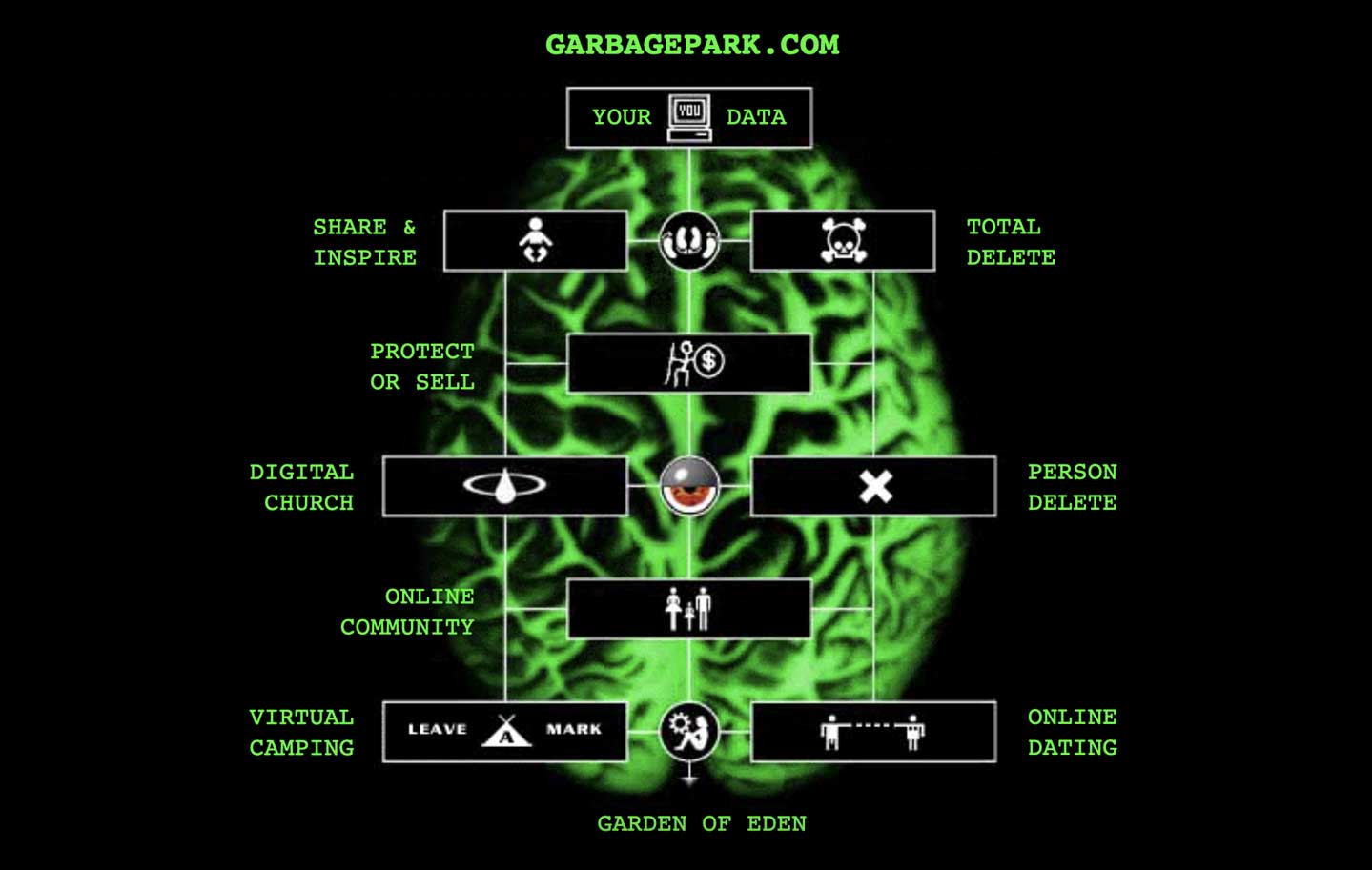

Trust Ethics in Virtual Communities

Image: Schoppen, 1996

The World as a Virtual Park, Leaving Traces, Linking the Unknown

Garbage Park was a pioneering and groundbreaking 'online privacy awareness' and ethical trust behavior project, created by Erik Schoppen and first published on the internet in 1996.

[Concept/design: Erik Schoppen. Key-programming: Peter Sloots, '96-'97].4

The concept of the website was as simple as it was effective: people who visited the site left traces ('digital garbage') behind in a virtual web park. The traces of their online behavior were visible to every other visitor. But when someone viewed the information of another user, an email was sent to that person with their own trace information. Although the website was meant to run for two years, after only 5 days the site had to be taken offline because of overwhelming e-mail traffic and shocked reactions, and the experiment had to be stopped.

Garbage Park was way ahead of its time due to its interactive 'brain-park' interface and the many novel concepts it offered.

For example, Garbage Park showed a profile page with all the information about the user, gathered and stored over time (a sort of proto-profile and timeline page, as used in social media many years later, such as Facebook or LinkedIn).

Garbage Park had - as far as we know - the first single-button web interface in the world.

The webpage was the first dating site with only a bee and a flower. The database engine behind the page mixed and matched people based on their profile (their traces) available on the internet.

Garbage Park also had the quietest place on the Net. A webpage called ‘The Garden of Eden’ could be visited by only one visitor at a time. This meant that it was possible to take up virtual space in the park. Other visitors, who had to wait their turn, could see who occupied the page and for how long.
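
The one-visitor-at-a-time behavior of 'The Garden of Eden' can be illustrated with a minimal Python sketch. It is not the original 1996 implementation: the class name, the first-come-first-served waiting queue and the use of plain seconds for time are assumptions made for this illustration.

```python
from collections import deque


class SingleVisitorPage:
    """A page that admits one visitor at a time; others wait their turn."""

    def __init__(self):
        self.occupant = None    # who is on the page right now
        self.entered_at = None  # when the occupant entered (seconds)
        self.waiting = deque()  # assumed first-come-first-served queue

    def enter(self, visitor, now):
        """Admit the visitor if the page is free, otherwise queue them."""
        if self.occupant is None:
            self.occupant, self.entered_at = visitor, now
            return True
        self.waiting.append(visitor)
        return False

    def leave(self, now):
        """The occupant leaves; the next waiting visitor (if any) enters."""
        if self.waiting:
            self.occupant, self.entered_at = self.waiting.popleft(), now
        else:
            self.occupant, self.entered_at = None, None

    def status(self, now):
        """What waiting visitors can see: who occupies the page, and for how long."""
        if self.occupant is None:
            return None
        return (self.occupant, now - self.entered_at)


if __name__ == "__main__":
    page = SingleVisitorPage()
    page.enter("anna", now=0.0)
    page.enter("ben", now=5.0)
    print(page.status(now=12.0))
```

Exposing the occupant and the duration through `status` mirrors the point of the experiment: taking up virtual space is visible to everyone who is waiting.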

Information about the project: www.garbagepark.com.

4. Schoppen, H.S. (1997) Garbage Park - A Comment on media, privacy and communication on the world wide web.; Since 1994, Erik Schoppen has pioneered work on online behavior, ethics, trust and privacy with his Privacy Awareness & Behavioral Research Projects, such as GarbagePark.com.

Trust Level

'WE' - Cooperative Trust

This refers to the mutual confidence and reliance that individuals, organizations, or societal groups have in each other when engaged in collaborative or cooperative endeavors or partnerships to achieve common or non-common goals.

Attitude and Behavioral Change starts with Reciprocal Human Connections

Social trust is about the trust between individuals: the trust we have in our immediate social environment and actors within that environment. Within this level, trust can be seen as “a psychological state comprising the intention to accept vulnerability based upon the positive expectations of the intentions or behavior of another” (Rousseau et al., 1998).1

This is important in cooperative trust, when collaboration is needed between people to achieve common goals, for example when neighbors work together to organize sustainable initiatives for the local community. In most cases, social trust leads to the default expectation of other people’s trustworthiness (Rotter, 1980),2 which Rempel et al. (1985)3 termed predictability in their trust measurement.

Predictability in interpersonal trust enables people to take risks and to deal with uncertainty, as they feel that others act consistently and don’t take advantage of them (Porter, Lawler & Hackman, 1975).4

Forecasting future actions also relies on knowledge about consistent behavior in the past. This is not only important for couples or groups, but also for managers and professionals in organizations (Mintzberg, 1973),5 as interpersonal trust has cognitive [knowledge about others] and affective [feelings of trust] foundations (Lewis & Weigert, 1985a, b).6

Available knowledge and positive feelings serve as a [behavioral intentional] platform from which people make leaps of faith (Luhmann, 1979; Simmel, 1964).7

Trust facilitates the willingness to invest in affective relationships over a longer period of time (Engle-Warnick & Slonim, 2006).8

Trust can therefore be seen as the long-term motivation to maintain relationships (Rempel, Holmes, & Zanna, 1985).9

This social consistency is valued most in social and business relationships in today’s organizations and societies, and is largely based on social similarities (Light, 1984).10

Hence, the importance of measuring the consistency and congruence of social norms and attitudes in interpersonal trust relationships.

1. Rousseau, D. M., Sitkin, S. B., Burt, R. S., & Camerer, C. (1998). Not so different after all: A cross-discipline view of trust. Academy of management review, 23(3), 393-404.

2. Rotter, J. B. (1980). Interpersonal trust, trustworthiness, and gullibility. American psychologist, 35(1),

3. Rempel, J. K., Holmes, J. G., & Zanna, M. P. (1985). Trust in close relationships. Journal of personality and social psychology, 49(1), 95.

4. Porter, L. W., Lawler, E. E., & Hackman, J. R. (1975). Behavior in organizations.

5. Mintzberg, H. (1973). Strategy-making in three modes. California management review, 16(2), 44-53.

6. Lewis, J. D., & Weigert, A. (1985). Trust as a social reality. Social forces, 63(4), 967-985.; Lewis, J. D., & Weigert, A. J. (1985). Social atomism, holism, and trust. The sociological quarterly, 26(4), 455-471.

7. Luhmann, N. (1979) Trust and Power. Wiley, Chichester.; Simmel, G. (1950). The sociology of Georg Simmel (Vol. 92892). Simon and Schuster.

8. Engle-Warnick, J., & Slonim, R. L. (2006). Inferring repeated-game strategies from actions: evidence from trust game experiments. Economic theory, 28(3), 603-632.

9. Rempel, J. K., Holmes, J. G., & Zanna, M. P. (1985). Trust in close relationships. Journal of personality and social psychology, 49(1), 95.

10. Zanna, M. P., & Rempel, J. K. (1988). Attitudes: A new look at an old concept. In D. Bar-Tal & A. Kruglanski (Eds.), The social psychology of knowledge (pp. 315–334). Cambridge, UK: Cambridge University Press.

From (People) Voting to (Earth) Voicing

Image: Schoppen, 2019; TEDx, 2021

A new system to rebuild trust in our sustainable future

In 2021 Erik Schoppen gave a TEDx Talk4 in which he proposed a new system to rebuild trust in our sustainable future [click 'next slide' above for the concept derived from a study that investigated the trust attitudes per level].5

In his talk 'A new system to rebuild trust in our sustainable future', he introduced the idea of an 'Earth Voicing System' to restore trust in a social, sustainable future for new generations. "There is a need for a system with a people-planet approach. That uses our existing political electoral systems, but also takes into account a planetary voting system. So that we can make future-proof decisions that do not have a negative impact on the world. Therefore, we not only give every person a voice, but we also give a voice to all life ecosystems on this planet."

Schoppen: "Right now, we are at a point in the climate crisis where we have to make a choice whether we follow leaders who do not think cross-generational. Time is running out on our planet and many young people feel they have no voice. They want to express that their existential expectation is based on their trust in a sustainable future. We need cross-generational decision making. Therefore, we need a system with a people-planet approach. That uses our existing voting system, but incorporates a planetary voicing system too, to make future proof decisions that have no negative impact on the world. We not only give every human a voice, but give all life ecosystems on this planet a voice as well."

View TEDx Talk.

4. Schoppen, H.S. (2021). A new system to rebuild trust in our sustainable future [video].

View on YouTube.

5. Schoppen, H.S. (2020) Trust across multiple levels of organization: An integrative framework to measure trust with the CAB-attitude model

Building, Bridging, Bonding

Image: Schoppen, 2017

Build Bridge Bond - Method for Sustainable Brand Leadership

The Build-Bridge-Bond® methodology for sustainable brand leadership is a scientifically substantiated management method for building strong mission-driven brands, brand trust and sustainable brand relationships. The method sees a brand as the (trust) relationship between the brand (organization) and its users, stakeholders and public. It is the total (holistic) brand experience (thoughts, feelings and behaviors) that a user (collectively or personally) associates with the brand, derived from consistent beliefs and congruent values. Essentially, the methodology is about connecting people and brands based on sustainable trust. With the methodology you can make an impact with your brand on people, markets and society.

The method was published as an article and in a book (Schoppen, 2017, Schoppen et al. 2015, 2022).5

From a neuroscientific perspective, the methodology shows the bridging stages that lead from vision and purposeful core values (which form the basis of brand identity) to trust-based brand relations, through meaningful brand interaction (brand dialogue) and positive user experiences (customer brand experience) that build long-lasting brand loyalty (brand reputation). The aim is to form an emotional bond with the user by rewarding loyal engagement and trust-based choices. This approach provides insight into human perception, memory systems and decision-making processes.

Read more about the method (English/Dutch) at www.buildbridgebond.com.

5. Schoppen, H. S. (2017). The Impact of Brands on People, Markets and Society: Build Bridge Bond Method for Sustainable Brand Leadership. H.S.Schoppen.; Keller, K.L., Heijenga, R., Schoppen, E., & Swaminathan, V. (2022). Strategisch merkenmanagement 5. Info: www.BuildBridgeBond.com, www.StrategischMerkenManagement.nl


Trust Level

'ME' - Individual Trust

Self-confidence is about someone's capability and competence to cope with (new) situations. It is an internalized and individual characteristic, influenced by personal experiences, achievements, skills, and (positive) self-perception.

The essence of trust lies in self-belief, dependence, risk-taking, expectations of predictable/positive outcomes and/or (mutual) reciprocity

Individual trust is about the trust a person has in one's own capacities and abilities to cope with challenging situations. On a personal level (i.e., within the individual) trust is foremost associated with concepts such as self-confidence and self-affirmation (e.g., Steele, 1988; Aronson, Cohen & Nail, 1999),1 although research recognizes several determinants of trust on an individual or micro level (Nooteboom & Six, 2003).2

Individual trust is also rooted in theories of self-efficacy, self-esteem, and locus of control. Self-efficacy refers to an individual's belief in their ability to succeed in specific tasks or situations, while self-esteem reflects an overall positive evaluation of oneself. Locus of control, on the other hand, captures the extent to which individuals believe they have control over their own lives, as opposed to external factors.

Individual trust is intricately linked to cognitive, emotional and conative (behavioral) processes.

In terms of cognitive trust, individuals with a strong sense of self-trust often have a realistic understanding of their strengths and weaknesses. This awareness contributes to a more accurate self-perception, fostering a stable foundation for trust in one's abilities.

Affective trust is strengthened by positive feedback, encouragement, and support from others, which can enhance an individual's confidence. Conversely, negative experiences or lack of support may erode self-trust.

A strong self-belief, shaped by experiences in childhood, adolescence, and adulthood, makes it easier to regulate emotions, which play a vital role in self-trust.

And conative trust is reinforced through behavioral feedback. When individuals set goals, take risks, and achieve positive outcomes, it enhances their confidence. On the other hand, setbacks and failures can challenge self-trust, requiring adaptive strategies to maintain or rebuild confidence.

Confidence, in addition, can be seen as the personal belief based on knowledge, experience or evidence (e.g., past performance), that certain future events will occur as expected (Earle, 2009).3

The stronger one’s self-belief, according to Rouse (2001),4 the stronger one’s independence, motivation and resilience, leading to a stronger sense of self-confidence even in challenging situations.

1. Steele, C. M. (1988). The psychology of self-affirmation: Sustaining the integrity of the self. In Advances in experimental social psychology (Vol. 21, pp. 261-302). Academic Press.; Aronson, J., Cohen, G., & Nail, P. R. (1999). Self-affirmation theory: An update and appraisal.; Cohen, A.R.; Stotland, E.; Wolfe, D.M. (1955). "An Experimental Investigation of Need for Cognition". Journal of Abnormal and Social Psychology. 51(2): 291–294, doi:10.1037/h0042761

2. Nooteboom, B., & Six, F. (Eds.). (2003). The trust process in organizations: Empirical studies of the determinants and the process of trust development. Edward Elgar Publishing.

3. Earle, T. C. (2009). Trust, confidence, and the 2008 global financial crisis. Risk Analysis: An International Journal, 29(6), 785-792.

4. Rouse, K. A. G. (2001). Resilient students' goals and motivation. Journal of adolescence, 24(4), 461-472.

Trust in a Social-Sustainable Future

Image: Schoppen, 2015; Schoppen, Jolij, Attitude Levels, 2019

Measuring trust attitudes over the levels of the trust framework

The term ‘trust in sustainability’ (Dutch: duurzaamheidsvertrouwen, Schoppen, 2012)5 can be described as the trust that people or stakeholders have in individuals, groups or organisations with sustainable intentions, or ones that have already manifested themselves in a future-proof or sustainable manner.

Trust is built up by cognitive, affective and behavioral attitude components [CAB-model, see Trust Attitudes], which can vary per trust level.

To measure these differences, we conducted a survey [n=90] in which respondents were asked to rate the amount of trust on the three attitudes for each of the five levels in the framework [scale 1-5].6

In the survey, we used the government and their communications on sustainability as an example.

[click 'next slide' above to view the trust attitudes per level]. The results showed that emotional involvement decreases when users have to interact on a system level.
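
The survey design described above (three attitudes rated on a 1-5 scale for each of five levels) lends itself to a simple aggregation. The Python sketch below is only an illustration of how mean ratings per level and attitude could be computed; the level labels `level_1` to `level_5`, the data layout and the function name are assumptions, and no real survey data is included.

```python
from collections import defaultdict
from statistics import mean

# Assumed labels: the three CAB attitudes and five placeholder level names.
ATTITUDES = ("cognitive", "affective", "behavioral")
LEVELS = ("level_1", "level_2", "level_3", "level_4", "level_5")


def mean_trust(responses):
    """Average the 1-5 ratings per (level, attitude) pair.

    `responses` is a list with one dict per respondent, mapping
    (level, attitude) tuples to that respondent's rating.
    """
    buckets = defaultdict(list)
    for response in responses:
        for (level, attitude), rating in response.items():
            if level not in LEVELS or attitude not in ATTITUDES:
                raise ValueError(f"unknown item: {(level, attitude)}")
            if not 1 <= rating <= 5:
                raise ValueError(f"rating out of range: {rating}")
            buckets[(level, attitude)].append(rating)
    return {key: mean(ratings) for key, ratings in buckets.items()}


if __name__ == "__main__":
    # Two made-up respondents, purely to show the data shape.
    demo = [
        {("level_1", "affective"): 2, ("level_5", "affective"): 4},
        {("level_1", "affective"): 4, ("level_5", "affective"): 2},
    ]
    print(mean_trust(demo))
```

Comparing the resulting means across levels for one attitude (for example, affective) is one way to make a pattern such as decreasing emotional involvement at the system level visible.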

Trust in sustainability and in governments, NGOs, and other (non)commercial organisations or (system) institutions is becoming increasingly important in a social, ecological and economic sense, since these 'agencies with influential power' are able to cause sustainable behavioral change more quickly with their laws, rules, services, products and exemplary behavior.

These agent entities must not only create welfare (or 'prosperity') for their stakeholders, but also improve the subjective wellbeing (or 'happiness') of people in society (in their neighbourhood or globally to solve the climate crisis).

In addition, consumers are taking an increasingly critical stance towards (brand) organisations that manifest themselves as sustainable, since they do not know how to assess an organisation’s sustainable behavior. Such trust is crucial for the transition to a sustainable future economy.

5. Research (Doctoral Grant) funded by Dutch Research Council (NWO)

6. Schoppen, H.S. (2020) Trust across multiple levels of organization: An integrative framework to measure trust with the CAB-attitude model

Measuring Personality & Trust Attitudes

Image: Schoppen, 2016

Individual expression of (personality-values based) attitudes

Besides attitudes, personality traits can also be a predictor of trust behavior. We express our personality and values through attitude-based thinking, our emotions and our behavior [which can be collected by smartphones].

During his research Erik Schoppen wanted to be able to quickly and accurately link personality traits to his studies about brands and (brand) trust.

To do this he developed an implicit self-associative personality test.7

This is a short 4-minute reaction time task to measure unconscious association strengths between concepts based on the five-factor model (also called 'The Big Five').

Although implicit association tests already exist, he and his co-developers developed the fastest validated online personality test in the world, which works well on smartphones, tablets or PCs. By making fast click-choices via the mobile phone's web browser, the test provides results in just a few minutes.

The test [free of charge for personal use] is available at www.personalitytraits.nl.

Larger-scale tests can be developed and carried out via our research platform www.resultal.nl.8

The platform offers many more options for research, like [visual or semantic] values-based IAT – online or on location.

No app install is needed.

With Resultal you have full access to and full control of your own data and results. You get access to all our tools for surveys and studies on location, online or in the lab. You can also create an unlimited number of experiments and studies (fair use policy).

7. Schoppen, H. S., Krook, L., Sloots, P., Jolij, J. (2016), From the inside lab to the outside world. Via www.worldbrainwave.com

8. Online psychological experiment and research platform www.resultal.nl

TRUST ATTITUDES

The 'deepest' levels of the framework encompass three key components: cognitive, affective, and conative [behavioral] trust attitudes, arising from the human brain.

These biological and mental structures can be described as cognitive [reasoned], affective [emotional] and conative [behavioral] trust (Ebert, 2009; Weitzl, Weitzl & Berg, 2017),1 as these components are based on a long history of attitude and trust research (e.g., Thomas & Znaniecki, 1918; Strong, 1925; Lewis & Weigert, 1985a, b; Schwarz & Bohner, 2001)2 and the three-component CAB-model (e.g., Rosenberg, 1960; Rempel & Zanna, 1988; Eagly & Chaiken, 1993; Hogg & Smith, 2007).3

The Cognitive-Affective-Behavioral (CAB) model explains how our thoughts (cognitive), feelings (affective), and actions (behavioral/conative) interact and influence each other.

The foundation of the framework is built upon these three psychological and neurological trust attitudes.

Besides the CAB-model, the stereotype content model (Cuddy, Fiske & Glick, 2008)4 also supports similar patterns of cognitive [competence/agency], emotional [warmth/communication] and behavioral reactions, including trust biases and attitudes.

1. Ebert, T. (2009). Trust as the key to loyalty in business-to-consumer exchanges (Vol. 1). Springer Fachmedien; Weitzl, W., Weitzl, W., & Berg. (2017). Measuring Electronic Word-of-Mouth Effectiveness. Wiesbaden: Springer Gabler.

2. Nooteboom, B., & Six, F. (Eds.). (2003). The trust process in organizations: Empirical studies of the determinants and the process of trust development. Edward Elgar Publishing; Strong, Jr., E. K. (1925). Theories of selling. Journal of Applied Psychology, 9(1), 75; Lewis, J. D., & Weigert, A. (1985). Trust as a social reality. Social Forces, 63(4), 967-985; Schwarz, N., & Bohner, G. (2001). The construction of attitudes. Blackwell handbook of social psychology: Intraindividual processes, 1, 436-457.

3. Rosenberg, M. J. (1960). Cognitive, affective, and behavioral components of attitudes. Attitude organization and change; Rempel, J. K., Holmes, J. G., & Zanna, M. P. (1985). Trust in close relationships. Journal of Personality and Social Psychology, 49(1), 95; Eagly, A. H., & Chaiken, S. (1993). The psychology of attitudes. Harcourt Brace Jovanovich College Publishers; Hogg, M. A., & Smith, J. R. (2007). Attitudes in social context: A social identity perspective. European Review of Social Psychology, 18(1), 89-131.

4. Cuddy, A. J., Fiske, S. T., & Glick, P. (2008). Warmth and competence as universal dimensions of social perception: The stereotype content model and the BIAS map. Advances in experimental social psychology, 40, 61-149.

Trust Attitude

Cognitive (Reasoned) Trust

Cognitive attitudes involve (explicit) rational thoughts, beliefs, and attributes associated with an object of trust. Cognitive trust is our belief in someone’s or something’s reliability or integrity, based on past experience or on reputation.

Foundation Framework

The cognitive component of trust attitudes involves our thoughts, beliefs, and ideas about something or someone. It represents our rational understanding and expectations about the behavior of the object of trust. Cognitive trust is often grounded in rational assessments and beliefs about the reliability and predictability of the trusted entity.

This section is still in progress; more will follow soon.

Trust Attitude

Affective (Emotional) Trust

Affective attitudes are our feelings towards a subject. When it comes to trust, this might involve feelings of security when thinking about a particular person or a sense of fear or unease when dealing with an entity we don’t trust.

Foundation Framework

The affective component deals with our emotional responses and feelings towards something or someone. It involves our liking or disliking, attraction or aversion, and sense of satisfaction or dissatisfaction. Affective trust is based on emotional bonds and confidence in interactions driven by feelings that can be attributed to a specific entity.

This section is still in progress; more will follow soon.

Trust Attitude

Conative (Behavioral) Trust

Conative attitudes involve our (implicit) actions and/or behavioral intentions towards a subject. In a trust context, this could be our willingness to rely on someone or to put ourselves in a vulnerable position because we trust them.

Foundation Framework

The conative component refers to our behavioral intentions or tendencies towards an object or issue of trust. It involves our willingness to act in a specific way, like engaging with, supporting, or avoiding an object we trust. Therefore, conative (or behavioral) trust reflects individual behavioral tendencies and intentions based on someone's attitudes.

This section is still in progress; more will follow soon.

Books & Articles

Publications

The Impact of Brands on People, Markets & Society

2017 | Sustainable Brand Leadership

The Build-Bridge-Bond® methodology is a framework to bridge the gap between brand positioning and user experience, and ultimately to forge an emotional bond with consumers and stakeholders.

The BBB-methodology, published during Erik Schoppen's research into Trusting Sustainability, sees a sustainable brand as a holistic brand experience between brand and user, including all thoughts, feelings, and behaviors. This relationship hinges on consistent beliefs and congruent values, which translate into sustainable trust and brand impact on people, markets, and society.

Read more about the method at www.buildbridgebond.com.

Or download the publication (PDF) here.

Information Security Magazine, Conference Issue

2019 | Cybersecurity, Trust & AI

In 2019, over 750 international information security specialists gathered for the SecureLink cybersecurity conference in the Netherlands. This Information Security issue features an interview with Erik Schoppen and a report on his keynote 'Cybersecurity & AI and the weakest link – people'.

Artificial neural networks that continuously monitor our thoughts and behavior to recognise patterns will become increasingly able to predict and (un)consciously control our thinking and actions. In doing so, we shift part of our evolutionary biological trust to digital trust based on AI systems. But given this trust interdependency, are we still in charge?

Read the interview and report (Dutch, PDF) here.

Strategic Brand Management, Benelux 5th Edition

2022 | Sustainable Brand Management

Strategic Brand Management, Benelux 5th Edition, 2022, is a comprehensive guide (670 pages) to building, measuring, and managing future-proof brand equity in a sustainable and interconnected world. The fifth edition focuses on sustainable brand building, addressing the economic, social, and ecological dimensions of brand value creation.

The book provides a complete overview of managing brand equity and (digital, social, and sustainable) branding. It serves as a standard reference for professionals, and an indispensable resource for higher (economic) education.

Read more about this publication (Dutch) at www.strategischmerkenmanagement.nl.

Evolution and Working of Trust inside and outside the Brain

2024 | Lectures/Masterclasses

In his lectures, Erik Schoppen shows the integral functioning of trust inside and outside the brain, and how to build future-proof trust to change behavior sustainably.

Discover how trust works in our brain. What are the functions of trust? Which ancient evolutionary mechanisms are behind trust, and how can you influence them? How does our brain distinguish between biological and digital trust?

Read more at www.evolutionoftrust.com.

Rebuilding Trust in Complex Systems in a Divided World

2024 | Lectures/Masterclasses

Learn from the trust systems of the past to (re)build trust in the future we all share.

This keynote discusses not only the antecedents of the current global trust crisis, but also how to restore our social, organisational, technological and societal trust systems. Trust is essential for change, social cohesion and leadership. For example, we need trust in sustainability to solve the global climate crisis.

Read more at www.trustcrisis.com.

Gain and Retain Trust as a Confident and Trusted Leader

2024 | Masterclasses/Boardroom

In his Trust Masterclasses, which cover the human brain, our daily decisions and behaviors, trust and leadership, Erik Schoppen shows how to build and deploy trust from a social, digital and sustainable (brand) management perspective. TRUST.MBA in One Day® is the only course that really dives into the Core of Trust and examines trust levels in organisations and sustainable leadership.

Read more at www.trust.mba.